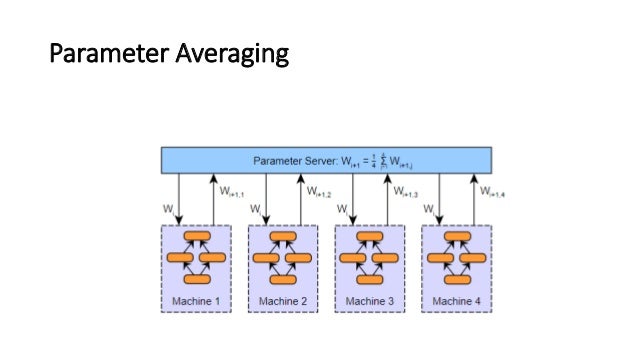

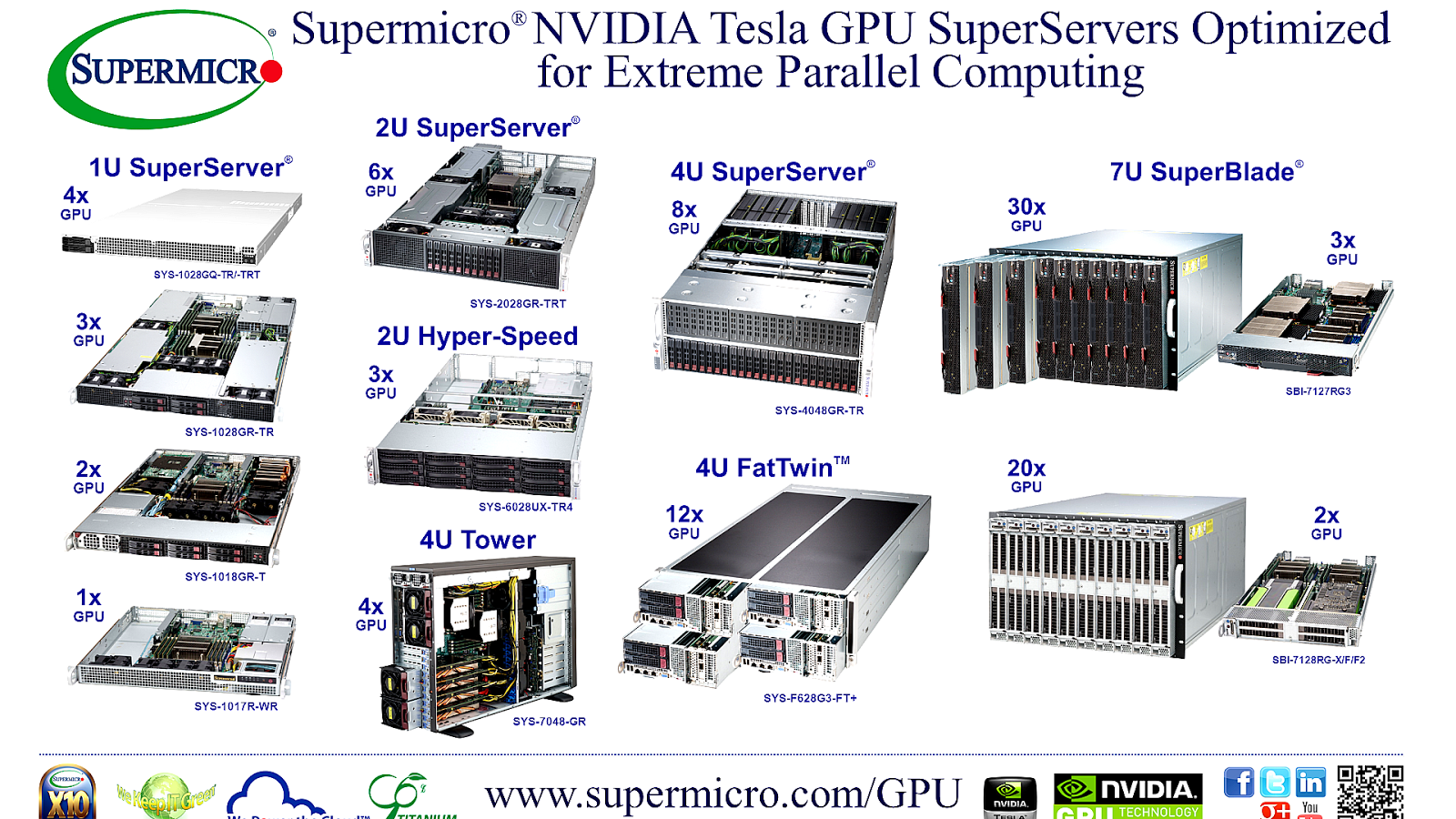

The selection of a GPU depends on the performance that the Deep Learning project requires. Choose the GPU carefully, because the project will run on it for a long time.

Different factors that you need to consider while choosing the best GPU for your Deep Learning project:

- The GPU should be able to support the project in clustering and integration. The ability to interconnect GPUs directly affects the scalability of the project. It also decides whether multiple GPUs can be used and which distribution strategies are available. Consumer GPUs typically do not support interconnection. As an example, NVLink connects GPUs within a server, while InfiniBand connects GPUs across different servers.
- It is necessary to understand which libraries a specific GPU supports, because different Machine Learning libraries support different GPUs. Your selection of GPU therefore depends on the Machine Learning libraries you are using. For example, NVIDIA GPUs support most of the common frameworks and Machine Learning libraries, such as TensorFlow and PyTorch.
- The license requirements for different GPUs also vary. For example, according to NVIDIA's guidance, certain chips are not allowed to be used in data centers. According to the licensing updates, CUDA software has restrictions on use with consumer GPUs. These license requirements might make a transition to production-supported GPUs necessary.

Three factors affect how well algorithms scale up across multiple GPUs, starting with data parallelism.

Data Parallelism: It depends on the size of the data that the algorithm uses and processes. If the data set is large, the selected GPU should work efficiently in multi-GPU training. If the data set is very large, InfiniBand should be used to enable distributed training, because very large data sets need the servers to communicate quickly with storage components and with each other.
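To make the idea of data parallelism more concrete, here is a minimal sketch in plain Python. It is not how any particular framework implements multi-GPU training: the one-parameter linear model, the function names, and the "workers" standing in for GPUs are all assumptions made for illustration. Each worker computes a gradient on its own slice of the batch, and the averaging step plays the role of the communication that an interconnect such as NVLink or InfiniBand would carry on real hardware.

```python
from typing import List, Tuple

Sample = Tuple[float, float]  # one (x, y) training pair


def gradient(w: float, samples: List[Sample]) -> float:
    """Mean gradient of the squared error (w*x - y)**2 with respect to w."""
    return sum(2.0 * (w * x - y) * x for x, y in samples) / len(samples)


def split_into_shards(samples: List[Sample], num_workers: int) -> List[List[Sample]]:
    """Give each hypothetical GPU worker an equally sized slice of the batch."""
    if len(samples) % num_workers != 0:
        raise ValueError("this sketch assumes the batch divides evenly")
    size = len(samples) // num_workers
    return [samples[i * size:(i + 1) * size] for i in range(num_workers)]


def data_parallel_step(w: float, samples: List[Sample],
                       num_workers: int, lr: float) -> float:
    """One gradient-descent step with the batch split across workers."""
    shards = split_into_shards(samples, num_workers)
    # On real hardware each shard's gradient would be computed on its own GPU.
    local_grads = [gradient(w, shard) for shard in shards]
    # Averaging stands in for the gradient exchange between GPUs or servers.
    avg_grad = sum(local_grads) / num_workers
    return w - lr * avg_grad


if __name__ == "__main__":
    batch = [(1.0, 2.0), (2.0, 4.0), (3.0, 6.0), (4.0, 8.0)]
    print(data_parallel_step(0.0, batch, num_workers=2, lr=0.01))
    print(data_parallel_step(0.0, batch, num_workers=1, lr=0.01))
```

With equally sized shards, the averaged gradient matches the gradient of the whole batch, so adding workers changes where the work happens rather than the result. The cost that grows with the number of workers is the gradient exchange, which is why the interconnect matters for large data sets.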
Below are the differences between a CPU and a GPU:

- Memory: A CPU typically works with a larger pool of system memory, while a GPU uses a smaller amount of dedicated memory.
- Speed: The GPU is much faster than the CPU for Deep Learning workloads. It means that a model that might take days to execute on a CPU may execute in hours on a GPU.
- Cores: The CPU has a few powerful, complex cores, while the GPU has many comparatively weaker but simpler cores.
- Instruction processing: The CPU is more suited for serial instruction processing, while the GPU is more suited for parallel instruction processing.
- Performance: The CPU focuses on low latency, while the GPU focuses on high throughput.

The GPU is a familiar term among data scientists who struggle to get high-performing code. Looking briefly at the history of GPU development, a GPU set up to work in parallel with the CPU on general computation is called a GPGPU (General Purpose Graphics Processing Unit). GPUs were originally developed to process graphics and image data better, but they were later found to suit scientific computing as well, because processing image or graphics data involves the use of matrices. GPGPUs began to be used for scientific computing problems around 2001, starting with an implementation of matrix multiplication. Implementing these first algorithms on the GPU made researchers realize that the GPU was faster. Later, NVIDIA introduced CUDA, which lets developers write programs for the GPU in a high-level language. This helped researchers identify the real potential of the GPU over the CPU. A CPU can perform the same computations as a GPU, only more slowly on parallel workloads. That speed is the main difference between the two.
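The matrix multiplication mentioned above shows why this kind of work suits a GPU. The following is a minimal sketch in plain Python, with function names chosen here for illustration; it runs on the CPU and only demonstrates the structure of the problem. Every cell of the result depends on one row and one column of the inputs and on no other cell, so the cells could in principle be computed at the same time by many simple cores.

```python
from typing import List

Matrix = List[List[float]]


def output_cell(a: Matrix, b: Matrix, row: int, col: int) -> float:
    """Dot product of one row of a with one column of b."""
    return sum(a[row][k] * b[k][col] for k in range(len(b)))


def matmul(a: Matrix, b: Matrix) -> Matrix:
    """Multiply two matrices one output cell at a time."""
    rows, cols = len(a), len(b[0])
    # Each (row, col) cell reads only the inputs, never another output cell,
    # so a GPU could hand every cell to a separate thread.
    return [[output_cell(a, b, r, c) for c in range(cols)] for r in range(rows)]


if __name__ == "__main__":
    print(matmul([[1, 2], [3, 4]], [[5, 6], [7, 8]]))
```

A CPU works through these cells a few at a time, while a GPU can spread them over its many cores, which is the throughput advantage described in the comparison of the two processors.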

GPU usage is gaining popularity these days, specifically in the field of Deep Learning.
